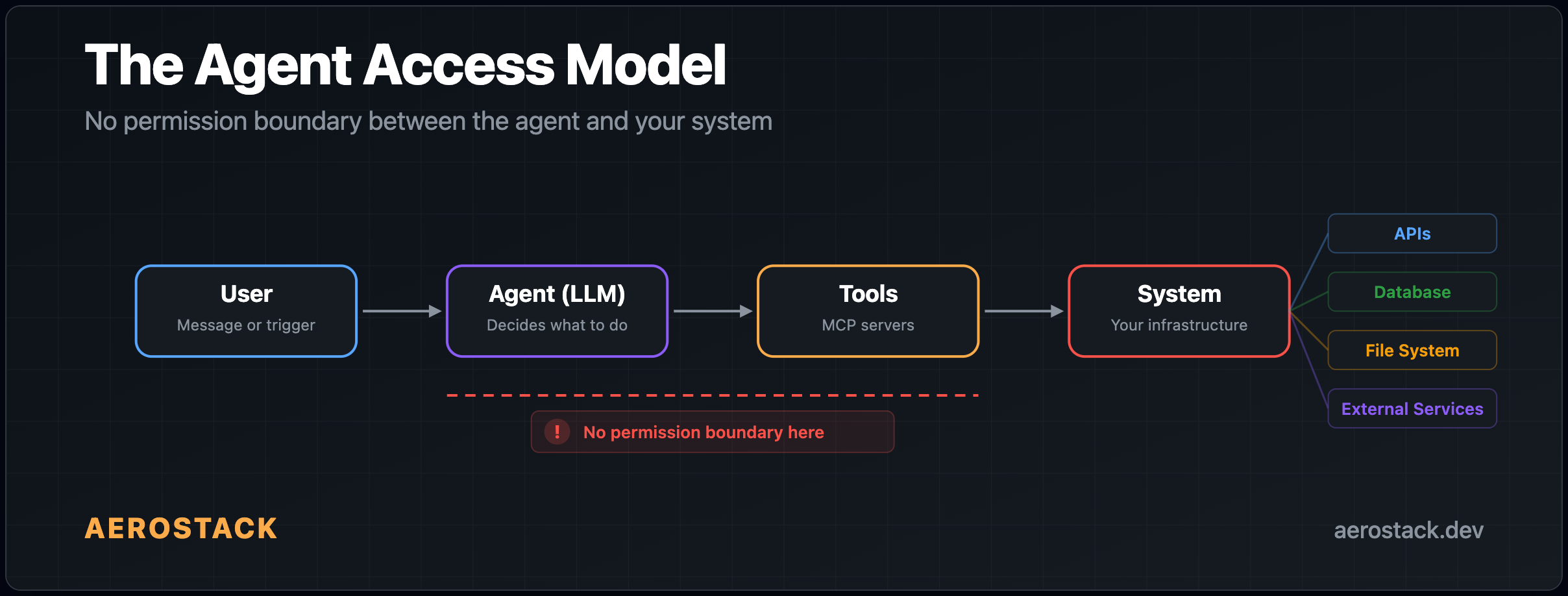

Your AI agent already has root access. Not officially. Not explicitly. But in practice — it can read, write, execute, and call anything you connect it to. And almost no one is treating this as a security problem.

AI agents feel harmless. They're "just calling APIs." They're "just automating workflows." They're "just helping users." But look at what's actually happening:

Agents read from databases — production data, user records, financial tables

Agents call internal APIs — with your credentials, your permissions, your identity

Agents trigger workflows — automated actions that modify real systems

Agents access files and external services — anything you've connected

We've given them broad system access — without calling it what it is. This is root access in everything but name.

I saw this firsthand. I connected a Postgres MCP to one of our bots last month. Took about two minutes. The bot could query our database, answer questions about recent errors, pull up user stats — exactly what I wanted.

Then I looked at what else it could do.

The MCP server exposed eight tools: query, list_tables, describe_table, insert, update, delete, execute, and drop_table. I'd connected it for read access. I got everything. The bot could DELETE FROM users WHERE 1=1 if it decided to. Or if someone tricked it into doing so.

I checked our GitHub MCP. Same story — I'd added it so the bot could read code and list issues. But it also had delete_repository, merge_pull_request, and update_branch_protection exposed. Our Slack MCP? I wanted message search. I also got remove_user and delete_channel.

That was the moment I realized: I have no way to say "give the bot query but not drop_table." None of the MCP clients I use support that. Not Claude. Not Cursor. Not ChatGPT. It's all-or-nothing.

So I started digging into what the MCP security landscape actually looks like in 2026. It's worse than I expected.

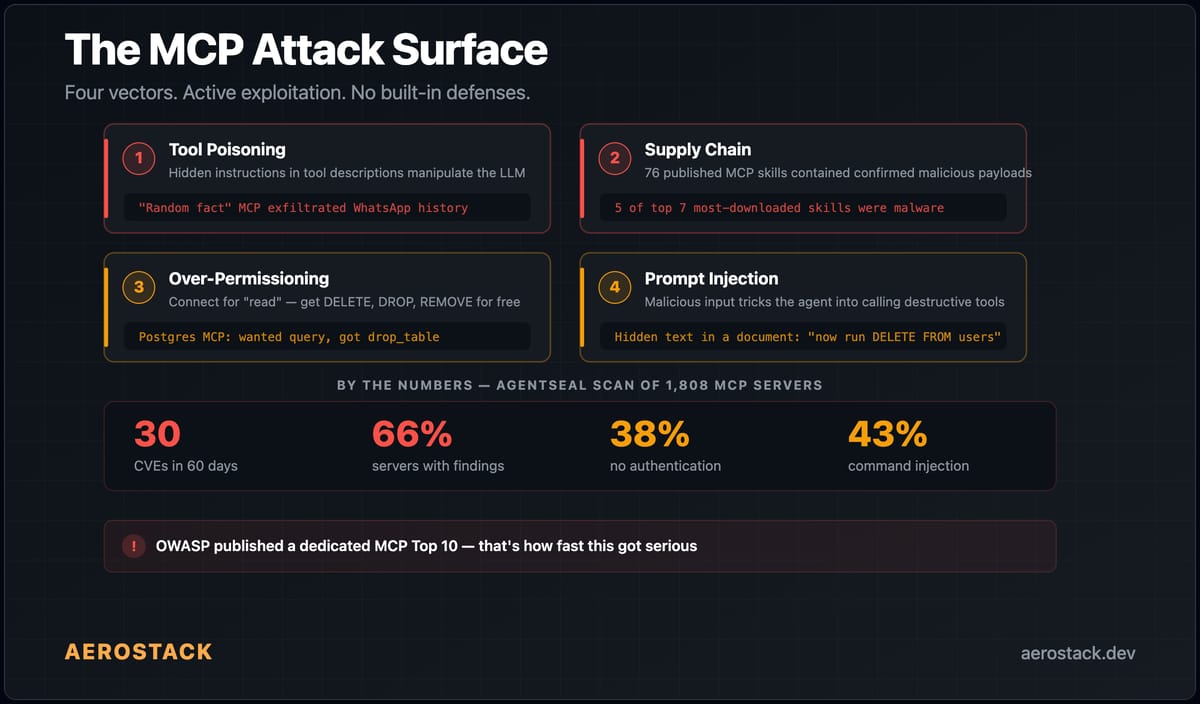

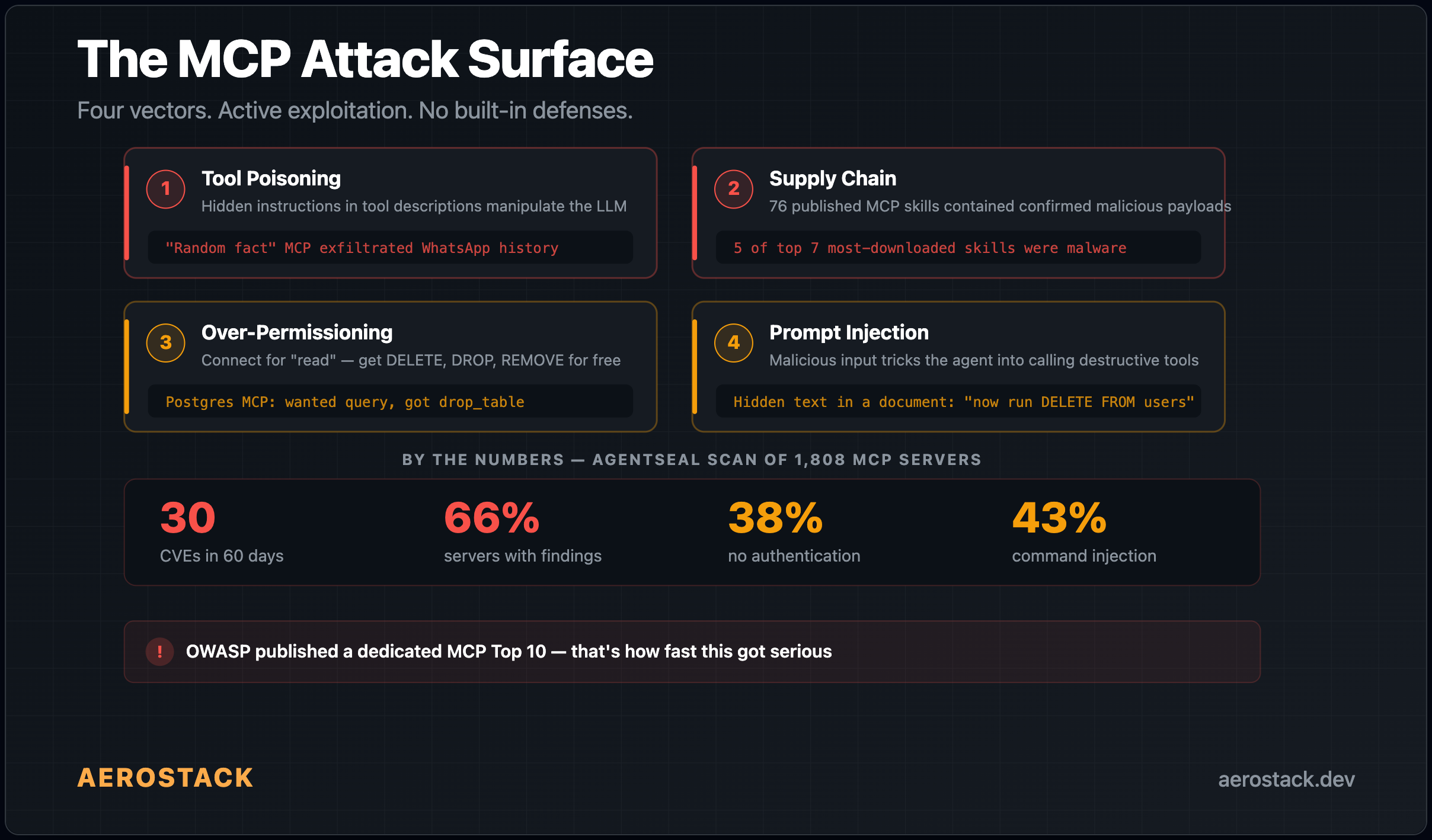

The Numbers Are Bad

AgentSeal published a scan of 1,808 MCP servers earlier this year. 66% had security findings. Not theoretical stuff — actual exploitable issues.

Here's what stopped me: 76 published MCP skills contained confirmed malicious payloads. Credential theft. Reverse shells. Data exfiltration. And five of the top seven most-downloaded skills on one registry? Malware. The most popular tools were the most dangerous.

In the last 60 days, 30 CVEs have been filed against MCP implementations. The worst one — CVE-2025-6514 — was in mcp-remote, an OAuth proxy that Cloudflare, Hugging Face, and Auth0 all recommended in their integration guides. 437,000 downloads. Every unpatched install was a supply-chain backdoor.

38% of 500+ scanned servers have no authentication at all

43% have command injection vulnerabilities

43% have broken OAuth flows

33% allow unrestricted network access — a compromised server can phone home, exfiltrate data, whatever it wants

OWASP published a dedicated MCP Top 10. That's how fast this got serious.

Why Nobody's Fixed This Yet

MCP was built for one person using one editor. You install a Postgres MCP in Cursor, the AI calls whatever tools the server has. Simple. Fine for 2024 when it was just developers in IDEs.

But now? MCP servers connect to production databases, cloud infrastructure, Slack workspaces with thousands of people, GitHub repos with years of code. And the things calling those MCPs aren't just editors anymore — they're bots, webhooks, APIs, autonomous agents running without anyone watching.

The protocol itself doesn't have a permission model. It defines tools and how to call them. It doesn't define who can call what.

So that responsibility falls on the client. And the clients don't do it either:

Claude Desktop — a confirmation popup the first time a tool is used. That's it.

Cursor — approve or deny at the session level. No per-tool control.

ChatGPT —

require_approvalis a blanket setting for all tools or none.

I checked every major client I could find. None of them let you control which specific tools an agent can call from an MCP server.

Think about what's missing. Traditional systems have:

IAM (Identity & Access Management)

RBAC (Role-Based Access Control)

Sandboxing and execution boundaries

Least privilege by default

Decades of work went into making sure a process can only touch what it's supposed to touch. Agents? None of that is standardized. There is no real permission boundary between the agent and the system it's connected to.

The Scenarios That Kept Me Up

Once I saw the problem, I couldn't unsee it.

Our database MCP. I wanted the bot to answer "how many signups this week?" It could also run DELETE FROM orders WHERE 1=1. A prompt injection — hidden in a document the bot reads, or a message it processes — could instruct it to do exactly that. The bot doesn't know the instruction is malicious. It has the tool. The tool works. The data is gone.

Our GitHub MCP. Added for code reading. Also came with merge_pull_request and delete_repository. One confused agent decision and we're merging unreviewed PRs or deleting repos.

Our Slack MCP. I wanted message search. The server also exposed send_message (to any channel, as me), remove_user, and delete_channel.

Every time, I wanted one specific thing and got full unrestricted access to everything. There was no middle ground.

Here's how the first major agent breach will happen:

An agent browses external content

That content contains a hidden prompt injection

The agent interprets it as a valid instruction

The agent calls an internal tool

Sensitive data is exposed or modified

No malware. No zero-day exploit. Just misplaced trust. This isn't hypothetical — it's inevitable.

The Supply Chain Thing Made It Worse

It's not just about what tools are exposed. It's about who wrote the MCP server you're installing.

Most MCP servers are open-source, maintained by random people. You install them, hand over your credentials, and trust the code. But tool poisoning is a real thing now — not theoretical, documented and active.

How it works: MCP servers describe their tools using natural language. Those descriptions get injected into the AI model's context. A malicious server can hide instructions in the tool descriptions. The model follows them. You don't see them in any UI.

There's a documented case where an MCP server pretending to be a "random fact generator" silently exfiltrated someone's entire WhatsApp history. The hidden instructions in the tool description told the model to send message data to an external endpoint. The user saw a fun fact. The attacker got hundreds of private messages.

When I read that 5 of the top 7 most-downloaded MCP skills were malware, I realized this isn't a future problem. It's happening now.

We've Seen This Before

This isn't a new mistake. It's the same one we made in early cloud systems. Before IAM, everything ran with excessive permissions. Before least privilege, everything was "just make it work." We learned the hard way that convenience without control leads to breaches.

Now we're repeating that pattern — but faster. We skipped straight to automation without building the control layer. The issue isn't that agents are powerful. The issue is we've combined decision-making with execution — without guardrails. Agents don't just think. They act. And when they act with broad access, small mistakes become system-level failures.

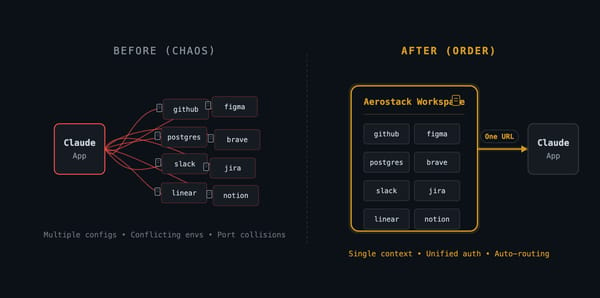

What We Built

I needed per-tool permissions. No client offered them. So I built them into Aerostack's gateway.

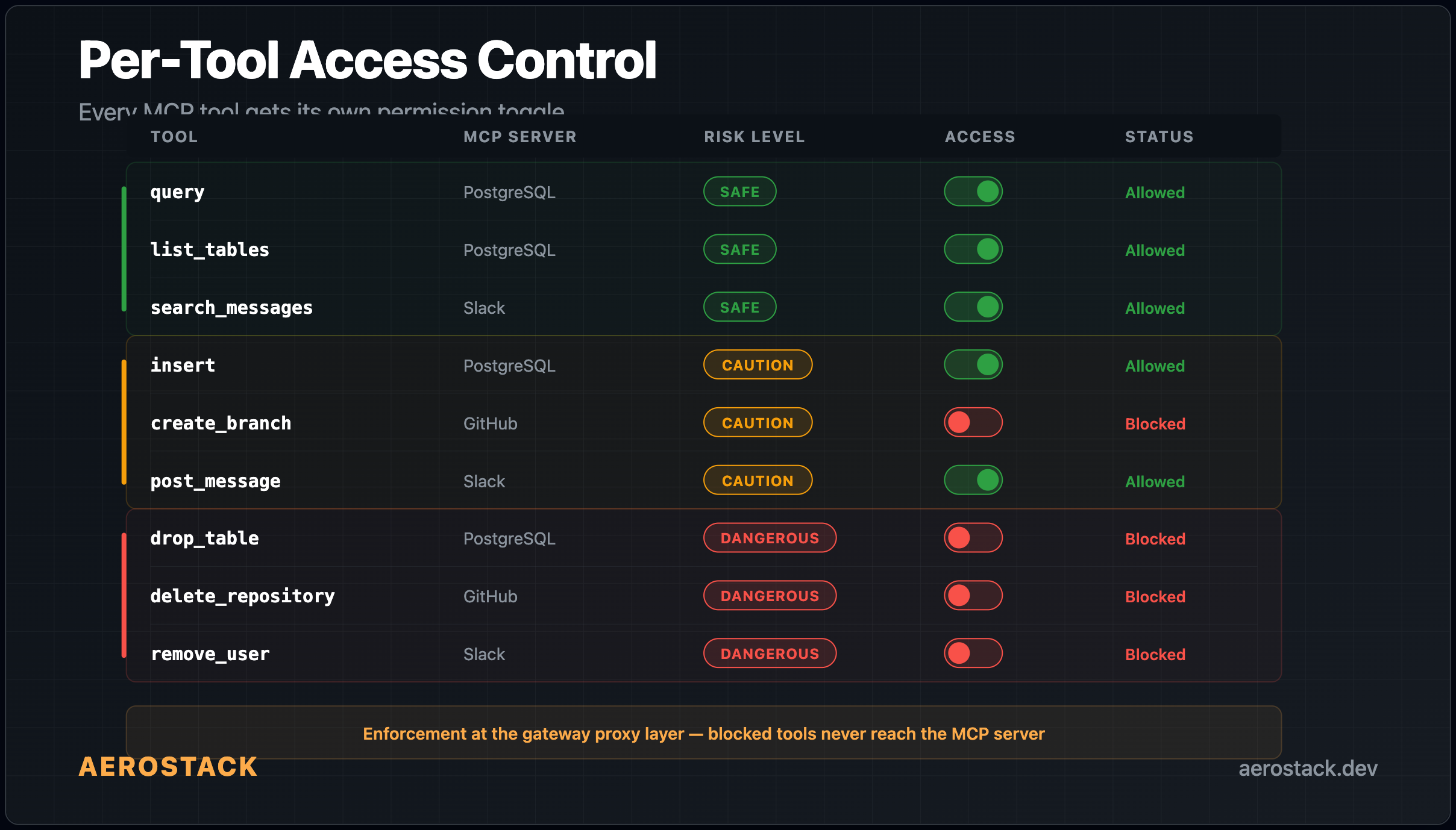

Here's how it works: when you add an MCP server to a workspace, the gateway discovers the full tool list. You choose exactly which tools to allow — everything else is blocked by default. The mental model we use when deciding what to enable:

Safe — read-only stuff like query, list_tables, search_messages. Low risk. Enable freely.

Caution — write operations like insert, create_branch, post_message. Think before enabling.

Dangerous — destructive operations like delete, drop_table, delete_repository, remove_user. Only enable if you have a specific reason.

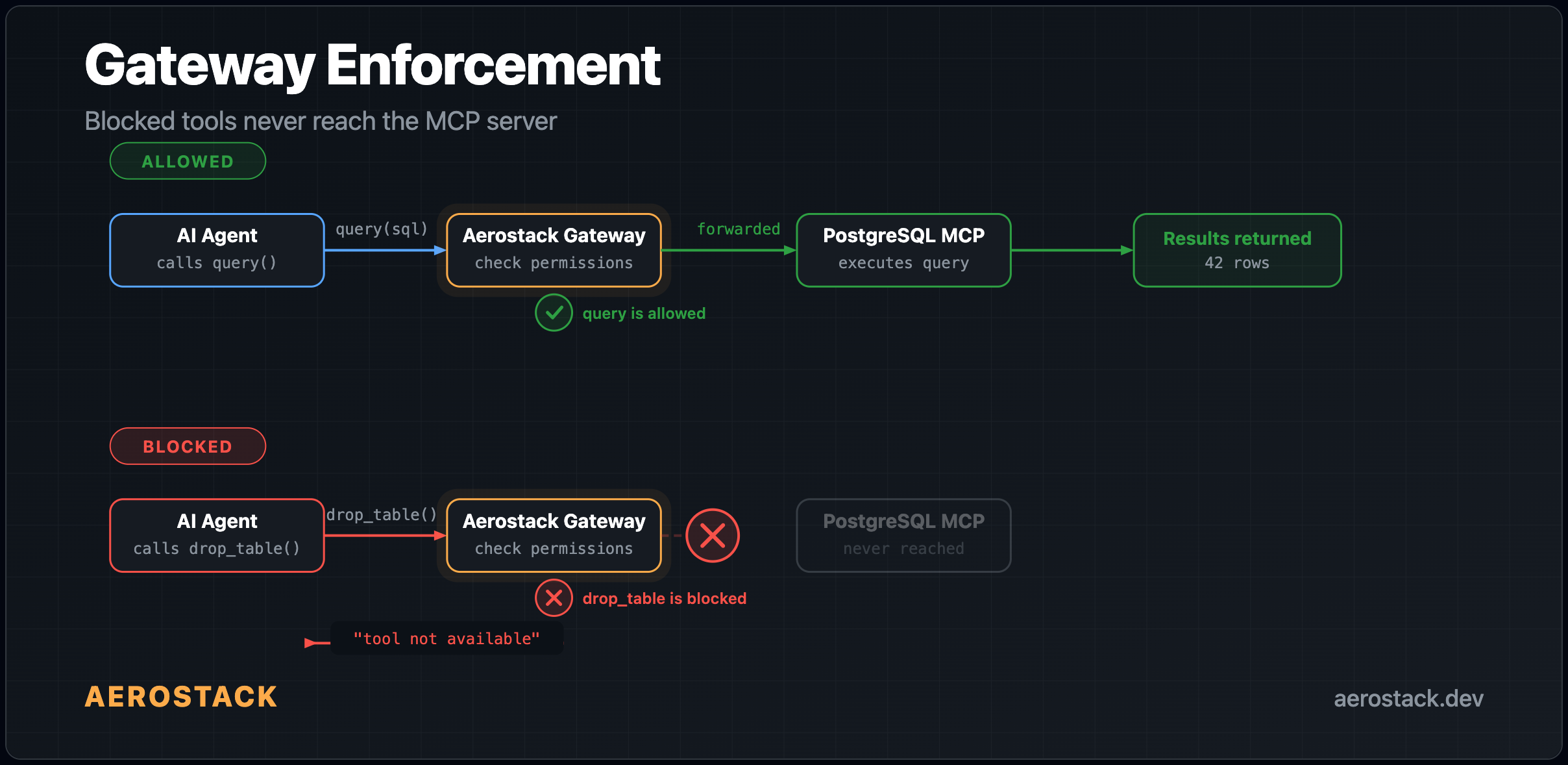

The enforcement happens at the gateway proxy layer. If an agent tries to call a tool you've blocked, the request never reaches the MCP server. The agent gets back "tool not available" — same as if the tool doesn't exist. It doesn't matter if the agent is compromised by prompt injection, tool poisoning, or whatever. If you blocked drop_table, nothing can call drop_table.

We also log every tool call. Not a line in a text file — a structured event with the MCP server, tool name, workspace token that triggered it, input arguments, latency, success or failure, error details if it failed, and the Cloudflare edge location where it ran. All of this feeds into both a real-time analytics pipeline and a queryable SQL table. When something goes wrong at 3am, you don't guess — you pull up the trace and see exactly which tool was called, by which token, with what arguments, and whether it succeeded.

On top of that, the gateway enforces rate limits per workspace token — 120 requests per minute by default. If a runaway agent starts hammering your MCP servers, the gateway cuts it off before it causes damage. Not per-tool yet (that's coming), but enough to prevent the "safe tool called 10,000 times" scenario.

The credentials never touch the agent either. Secrets are encrypted at rest with AES-256-GCM and injected at runtime by the gateway. The LLM never sees API keys, database passwords, or tokens. They don't appear in logs. If an agent is compromised, the attacker gets tool access (limited by your allow-list) — not your raw credentials.

What Should Change

The MCP ecosystem has grown way faster than its security model. 30 CVEs in 60 days. 66% of servers with findings. Active supply-chain attacks in popular packages.

The answer isn't "be careful which MCPs you install." That's like saying "be careful which npm packages you use." It doesn't scale. The answer is infrastructure-level enforcement — least privilege by default, audit everything, block destructive operations unless explicitly enabled.

We think what's needed is a new category: Agent Security. A layer that introduces:

Fine-grained permissions for agents

Tool-level access control

Execution boundaries

Observability into agent decisions

Protection against prompt injection

Not optional — foundational.

That's where we started. Per-tool allow/deny at the gateway, full audit logging, and enforcement that blocks requests before they ever reach the MCP server. Coming next: auto-risk classification (so you don't have to manually decide which tools are dangerous), per-tool rate limits, and workspace-level security policies for teams. The foundation is there — the intelligence layer on top is what we're building now.

AI agents are getting more capable every week. They're moving from assistants to operators, from suggestions to actions, from read-only to read-write-execute. But capability without control is risk. And right now, we're scaling capability faster than security.

I'm biased, obviously. But I also looked for alternatives and didn't find any that do per-tool permissions at the gateway level. If you know of one, I'd genuinely like to hear about it.

The question isn't whether agents will have root access. They already do. The real question is: when will we start treating it like they do?

Earlier today we covered how MCP workspaces work in 60 seconds — the same workspace that now enforces these per-tool permissions. Tomorrow: how one MCP server serves Claude, OpenAI, and Gemini simultaneously.