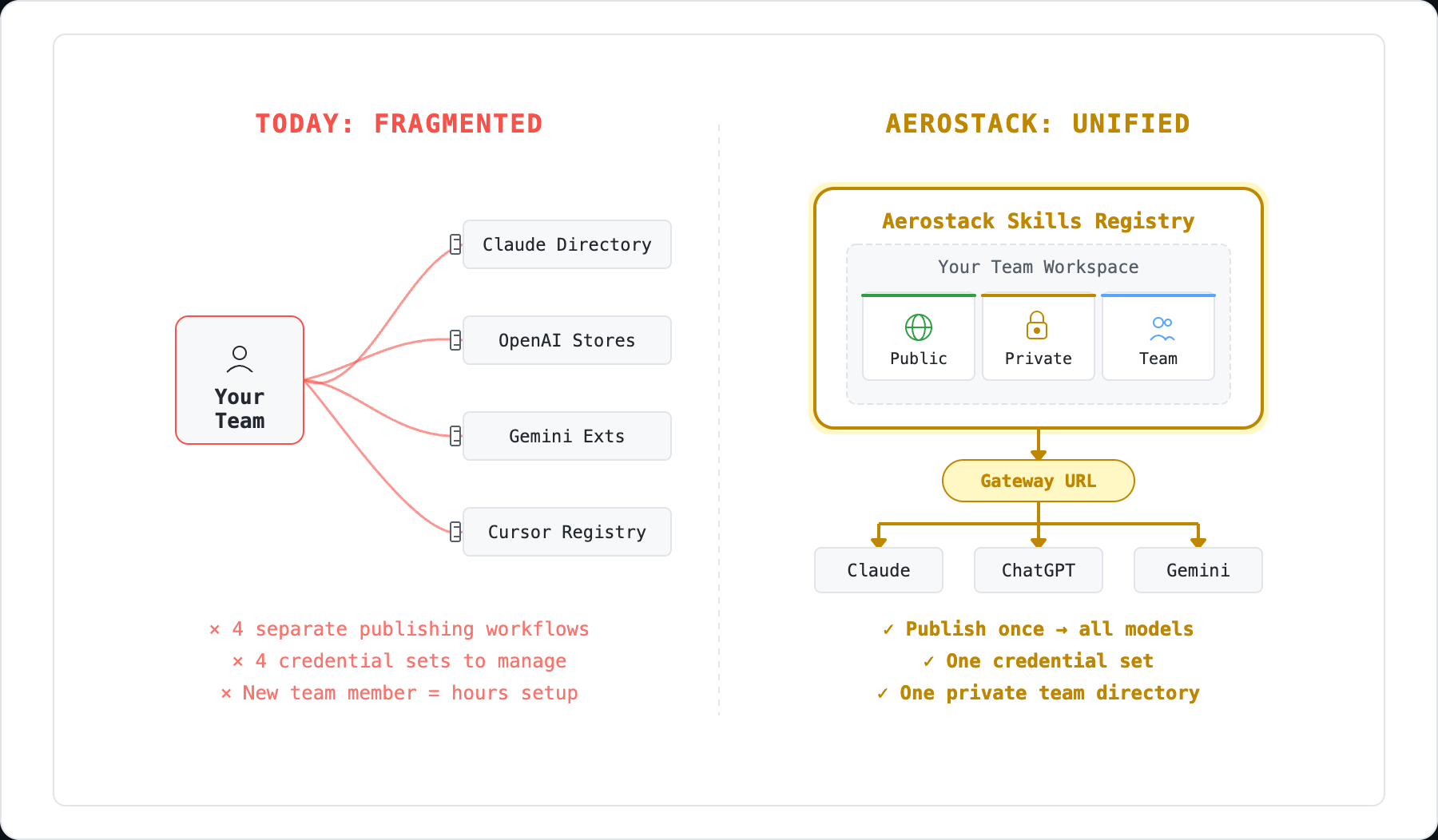

You build one MCP. It works in Claude. But your team uses Cursor. Your CI pipeline calls OpenAI. Your mobile dev uses Gemini. Three different tool schema formats. Three different integrations to maintain.

That's the problem Aerostack's workspace gateway solves.

The Multi-Platform Problem

Claude and MCP-native clients (Cursor, VS Code Copilot, Windsurf, Cline, Continue) speak MCP's JSON-RPC protocol. You define tools with names, descriptions, and typed parameters. It works.
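To make the MCP side concrete, here is a minimal sketch of the JSON-RPC 2.0 message a client sends to list tools, and of how it might read the reply. The helper names and the sample response are hand-written for illustration (the sample mirrors the query tool used later in this post), and transport details such as stdio or HTTP are left out.

```python
import json


def build_tools_list_request(request_id: int) -> str:
    """Serialize a JSON-RPC 2.0 request for MCP's tools/list method."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": "tools/list"})


def tool_names(response_text: str) -> list[str]:
    """Extract tool names from a tools/list response body."""
    response = json.loads(response_text)
    if "error" in response:
        # JSON-RPC reports failures in an "error" member instead of "result".
        raise RuntimeError(response["error"].get("message", "unknown error"))
    return [tool["name"] for tool in response["result"]["tools"]]


# Hand-written sample response for illustration, not captured from a real server.
SAMPLE_RESPONSE = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "database-mcp__query",
                "description": "Execute a SQL query",
                "inputSchema": {
                    "type": "object",
                    "properties": {"sql": {"type": "string"}},
                    "required": ["sql"],
                },
            }
        ]
    },
})

request_text = build_tools_list_request(1)
names = tool_names(SAMPLE_RESPONSE)
print(request_text)
print(names)
```

Each tool in the reply carries a name, a description, and a JSON Schema under inputSchema, which is the shape the other platforms' formats have to be derived from.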

But OpenAI uses a different format — function-calling with its own schema structure. Gemini uses yet another — tool definitions with uppercase type names and its own conventions for optional fields and enums.

The semantic information is identical. A database query tool takes a SQL string and returns rows. But encoding that tool for each platform requires translating the schema. Parameter names stay the same. Descriptions stay the same. The wrapper format changes.

If you maintain separate integrations for each platform, you're patching bugs in three places. Adding a parameter means updating three schemas. Over time, they drift. Tests multiply. The maintenance cost compounds.

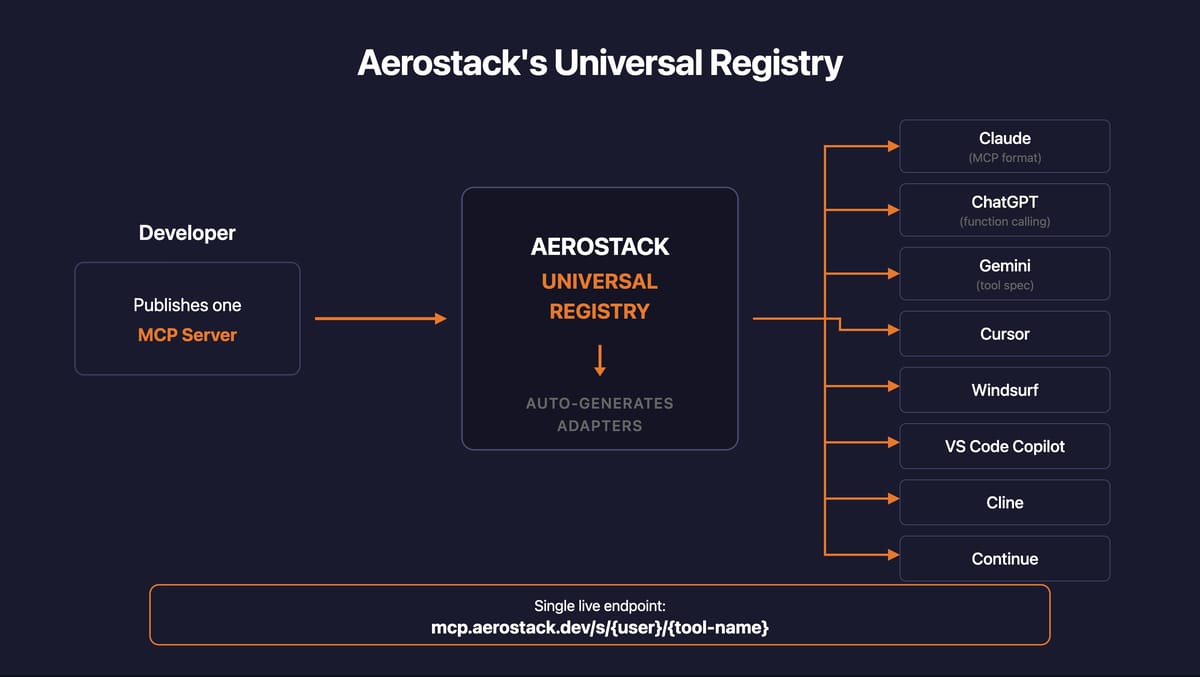

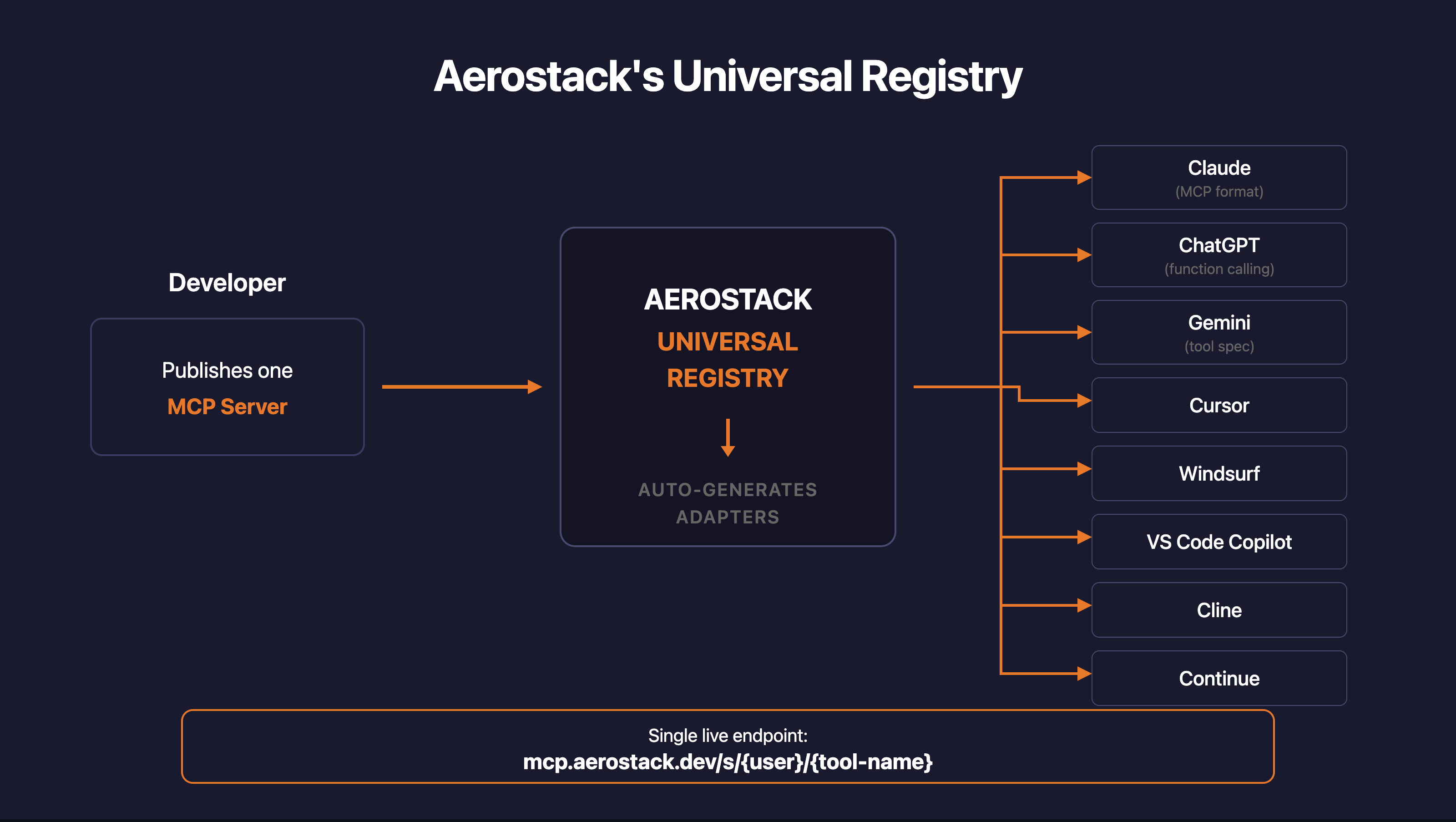

How Aerostack Handles This

When you create a workspace and add MCPs, those tools become available through the workspace gateway. The gateway serves your tools in three formats from a single workspace URL:

MCP native — for Claude Desktop, Cursor, Cline, Continue, Windsurf, and any MCP-compatible client. Standard JSON-RPC tools/list request.

OpenAI function-calling — for applications using OpenAI's API. Each tool is wrapped in the { type: "function", function: { name, description, parameters } } format that OpenAI expects.

Gemini tool definitions — for Google's Gemini API. Tool schemas are transformed with uppercase type names and Gemini's specific conventions.

Your MCPs don't change. The workspace gateway handles the format translation. One set of tools, multiple clients consuming them simultaneously.
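The OpenAI case is the simplest of these translations, and a rough sketch shows why: the MCP input schema is already JSON Schema, so it only needs to be moved under a different key. The function name to_openai_tool is invented for this example and is not Aerostack's implementation; it only illustrates the kind of wrapping described above.

```python
import copy


def to_openai_tool(mcp_tool: dict) -> dict:
    """Wrap an MCP tool definition in OpenAI's function-calling envelope."""
    # The JSON Schema under inputSchema is reused unchanged as "parameters".
    # Deep-copy it so later edits to one format never leak into the other.
    parameters = copy.deepcopy(
        mcp_tool.get("inputSchema", {"type": "object", "properties": {}})
    )
    return {
        "type": "function",
        "function": {
            "name": mcp_tool["name"],
            "description": mcp_tool.get("description", ""),
            "parameters": parameters,
        },
    }


# The query tool from this post, in its MCP form.
QUERY_TOOL = {
    "name": "database-mcp__query",
    "description": "Execute a SQL query",
    "inputSchema": {
        "type": "object",
        "properties": {
            "sql": {"type": "string", "description": "The SQL query"},
            "timeout": {"type": "integer", "default": 30},
        },
        "required": ["sql"],
    },
}

openai_tool = to_openai_tool(QUERY_TOOL)
print(openai_tool["function"]["name"])
```

Because the conversion is a pure function of the MCP definition, it can run on every request for the tool list, so there is no second schema to keep in sync.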

What the Translation Looks Like

Here's a concrete example. Your workspace has a database MCP with a query tool. When different clients request the tool list, they each get the format they expect:

MCP client receives:

    {
      "name": "database-mcp__query",
      "description": "Execute a SQL query",
      "inputSchema": {
        "type": "object",
        "properties": {
          "sql": { "type": "string", "description": "The SQL query" },
          "timeout": { "type": "integer", "default": 30 }
        },
        "required": ["sql"]
      }
    }

OpenAI client receives:

    {
      "type": "function",
      "function": {
        "name": "database-mcp__query",
        "description": "Execute a SQL query",
        "parameters": {
          "type": "object",
          "properties": {
            "sql": { "type": "string", "description": "The SQL query" },
            "timeout": { "type": "integer", "default": 30 }
          },
          "required": ["sql"]
        }
      }
    }

Gemini client receives:

    {
      "name": "database-mcp__query",
      "description": "Execute a SQL query",
      "parameters": {
        "type": "OBJECT",
        "properties": {
          "sql": { "type": "STRING", "description": "The SQL query" },
          "timeout": { "type": "INTEGER", "description": "" }
        },
        "required": ["sql"]
      }
    }

The differences are structural, not semantic. OpenAI wraps everything in a function object. Gemini uppercases type names and handles defaults differently: in this example, the timeout default of 30 is not carried over. The tool name, description, and parameter semantics are preserved across all three.

For a simple tool like this, the differences look minor. They compound with nested objects, enum constraints, optional vs. required fields, and platform-specific validation rules. The gateway handles all of it so you don't maintain three separate schemas.
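Nested schemas are where a hand-maintained Gemini definition tends to go wrong, because the type rewrite has to reach every level. The sketch below is one way to do that recursively. It is not Aerostack's code: the function names, the nested sample tool, and the choice of which keywords to keep (description, enum, required) or drop (default and anything else) are assumptions made for illustration.

```python
# Assumption for this sketch: only these keywords are copied through as-is.
KEPT_KEYWORDS = ("description", "enum", "required")


def to_gemini_schema(schema: dict) -> dict:
    """Recursively rewrite a JSON Schema fragment with uppercase type names."""
    out = {}
    if "type" in schema:
        # Assumes "type" is a single string such as "object", not a list of types.
        out["type"] = schema["type"].upper()
    for key in KEPT_KEYWORDS:
        if key in schema:
            out[key] = schema[key]
    if "properties" in schema:
        out["properties"] = {
            name: to_gemini_schema(sub) for name, sub in schema["properties"].items()
        }
    if "items" in schema:
        out["items"] = to_gemini_schema(schema["items"])
    # Everything else (for example "default") is dropped in this sketch.
    return out


def to_gemini_declaration(mcp_tool: dict) -> dict:
    """Build a Gemini-style tool declaration from an MCP tool definition."""
    return {
        "name": mcp_tool["name"],
        "description": mcp_tool.get("description", ""),
        "parameters": to_gemini_schema(mcp_tool["inputSchema"]),
    }


# Hypothetical tool with a nested object, an enum, and an array.
NESTED_TOOL = {
    "name": "database-mcp__query",
    "description": "Execute a SQL query",
    "inputSchema": {
        "type": "object",
        "properties": {
            "sql": {"type": "string", "description": "The SQL query"},
            "tags": {"type": "array", "items": {"type": "string"}},
            "options": {
                "type": "object",
                "properties": {
                    "format": {"type": "string", "enum": ["json", "csv"]},
                    "timeout": {"type": "integer", "default": 30},
                },
            },
        },
        "required": ["sql"],
    },
}

declaration = to_gemini_declaration(NESTED_TOOL)
print(declaration["parameters"]["properties"]["options"])
```

Keeping an explicit list of keywords to copy makes the platform differences visible in one place, which is the same reason to centralize the translation in a gateway instead of in each client.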

Supported Clients

The workspace gateway has been tested with:

Claude Desktop — native MCP over JSON-RPC

Cursor — MCP support in the code editor

VS Code Copilot — Copilot Chat extension with MCP

Windsurf — IDE with MCP integration

Cline — MCP-native agent framework

Continue — IDE copilot with MCP support

OpenAI API clients — any application using OpenAI's function-calling protocol

Gemini API clients — any application using Google's tool definitions protocol

The architecture supports any client that speaks MCP, OpenAI function-calling, or Gemini tool definitions.

One Workspace, Every Client

The practical impact: your team stops maintaining per-platform tool integrations.

Your Cursor plugin connects to the workspace. Your production API calls the same workspace using the OpenAI format. Your Gemini-based mobile agent connects to the same workspace too. One set of MCPs, one set of credentials, one set of tools — served to every client in its native format.

When you add a new MCP to the workspace, every client sees it immediately. When you fix a bug in an MCP, every client gets the fix. When you rotate a credential, every client continues working. No synchronization. No separate deployments.

This is what "publish once, use everywhere" actually means. Not a slogan, but a single workspace URL that serves MCP, OpenAI function-calling, and Gemini tool definitions.

What's Next

Tomorrow we're covering Gen 3 bots — how MCP-orchestrated agents work differently from flowchart bots and RAG bots, and why giving the LLM tools instead of a decision tree changes what's possible.