Not every message needs your most expensive model.

A user asking "What's your return policy?" doesn't need the reasoning capability of your top-tier LLM. Neither does someone confirming they received an order email. But if you route every message through the same model, you're paying premium inference costs on questions a smaller model handles fine.

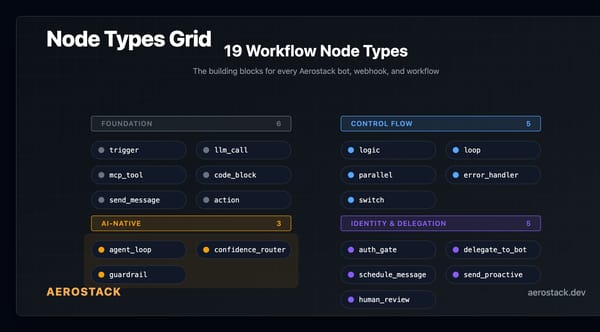

We built the confidence_router workflow node to let you route messages to different models based on complexity.

The Cost Problem

Most bots today use a single LLM for every message. Operationally simple — one model, one API call pattern. But expensive.

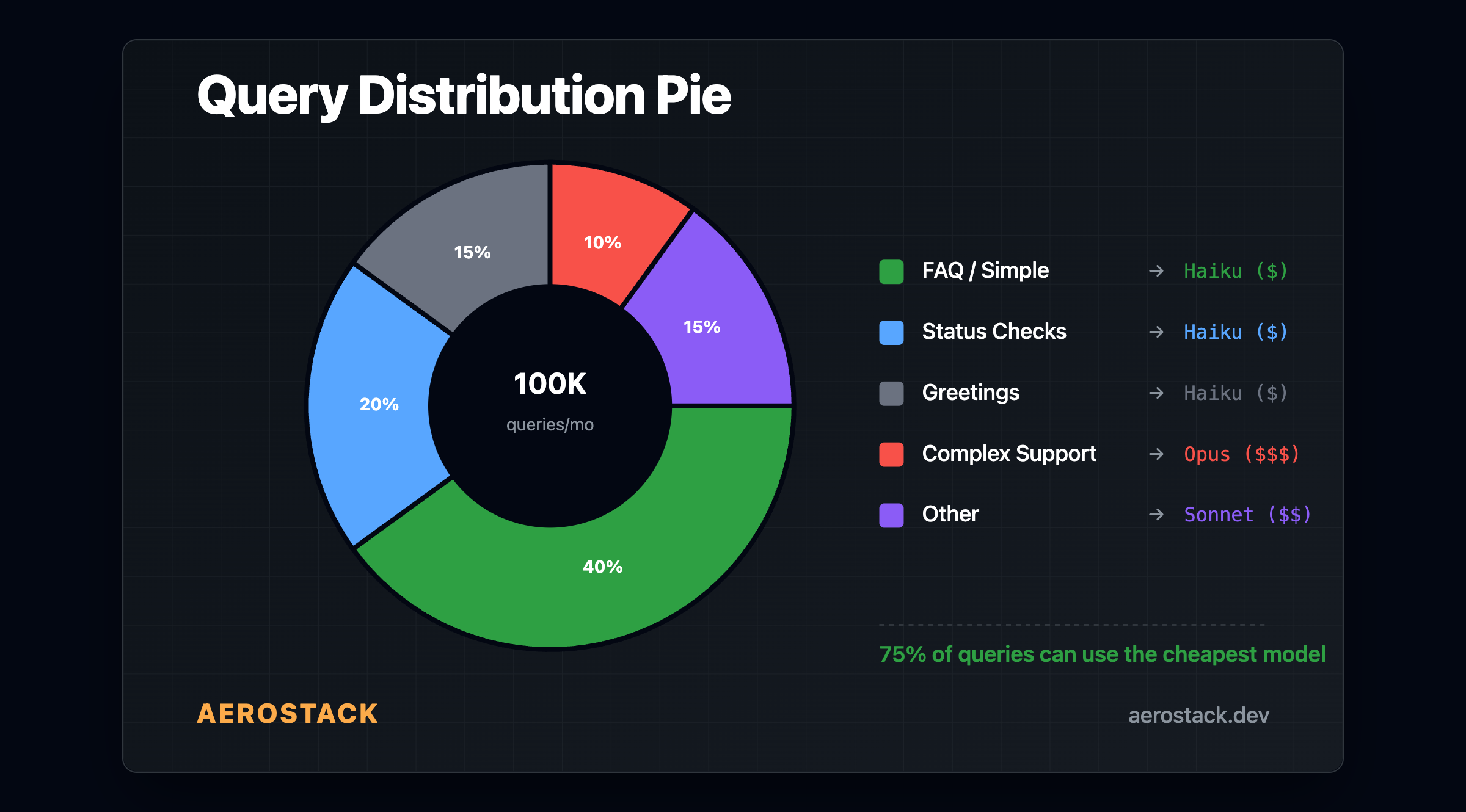

A typical bot handles:

FAQ lookups ("What's the return policy?")

Status checks ("Where's my order?")

Greetings ("Hi, I need help")

Complex queries ("Why did this charge appear twice on my card and can you refund the duplicate?")

The first three are classification and retrieval tasks. They don't need deep reasoning. The last one might need multi-step tool calls, context from multiple MCP servers, and careful analysis. Running all four through the same expensive model is wasteful.

How the confidence_router Works

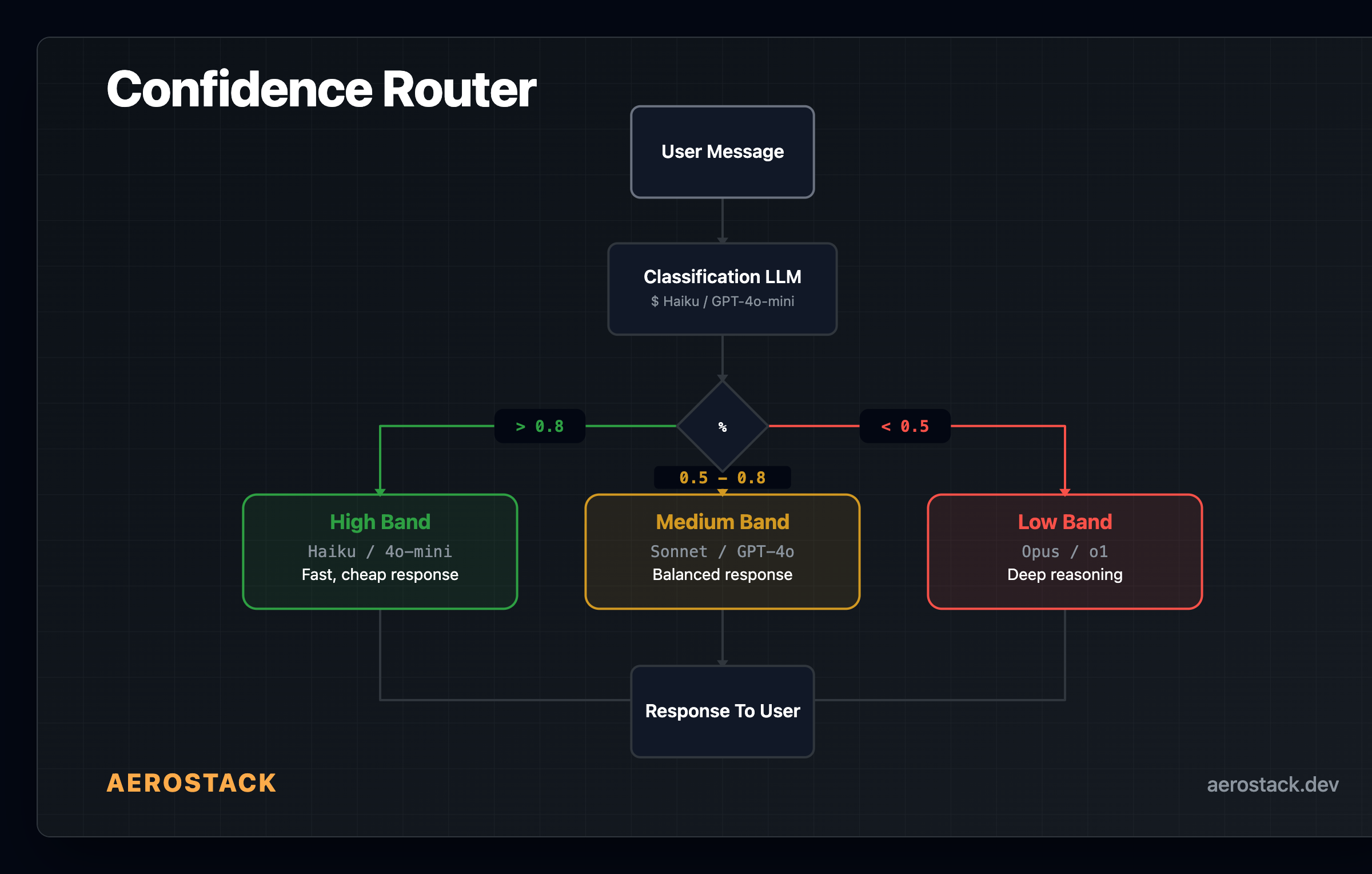

The node does two things: classify the message and route based on the result.

Classification. You define a set of intent categories — for example, ["billing", "technical", "general", "greeting"]. The node sends the message to an LLM and asks it to classify the intent and assign a confidence score between 0 and 1. The response comes back as structured JSON: an intent label and a confidence number.
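The sketch below shows the parsing side of the classification step: turning the classifier's JSON reply into an intent and a score. Several details are illustrative assumptions, not the node's actual behavior:

- the reply shape `{"intent": ..., "confidence": ...}`;
- the `Classification` type and `parse_classification` helper;
- treating malformed or out-of-range replies as zero confidence, so they fall through to the most capable model.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Classification:
    intent: str
    confidence: float


def parse_classification(raw: str, categories: list[str]) -> Classification:
    """Parse a classifier reply into an intent label and a 0-1 score.

    Assumes a reply shaped like {"intent": "...", "confidence": 0.93}.
    Anything malformed gets confidence 0.0, which sends the message to the
    most capable model instead of failing the workflow.
    """
    try:
        data = json.loads(raw)
        intent = str(data["intent"])
        confidence = float(data["confidence"])
    except (json.JSONDecodeError, KeyError, TypeError, ValueError):
        return Classification(intent="unknown", confidence=0.0)

    # An intent outside the configured categories, or a score outside [0, 1],
    # is not trustworthy either.
    if intent not in categories or not 0.0 <= confidence <= 1.0:
        return Classification(intent=intent, confidence=0.0)
    return Classification(intent=intent, confidence=confidence)


categories = ["billing", "technical", "general", "greeting"]
print(parse_classification('{"intent": "greeting", "confidence": 0.97}', categories))
print(parse_classification("not json", categories))
```

Failing toward the expensive model is a deliberate choice in this sketch. A bad classification then costs money rather than answer quality.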

Routing. Based on the confidence score, the node routes to one of three bands:

High confidence (score above your high threshold) — the message is straightforward. Route to a fast, cheap model.

Medium confidence (between your two thresholds) — needs some reasoning. Route to a mid-tier model.

Low confidence (below your low threshold) — complex or ambiguous. Route to your most capable model.
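The band logic comes down to two comparisons. This sketch uses the default thresholds of 0.8 and 0.5. The `route_band` helper is hypothetical. Sending scores exactly equal to a threshold to the medium band is also an assumption, since "above" and "below" leave the boundaries unspecified.

```python
def route_band(confidence: float, high: float = 0.8, low: float = 0.5) -> str:
    """Map a classifier confidence score to one of three routing bands.

    Strictly above `high` -> "high" (fast, cheap model).
    Strictly below `low`  -> "low" (most capable model).
    Anything else, including scores equal to a threshold -> "medium".
    """
    if not low < high:
        raise ValueError("low threshold must be below high threshold")
    if confidence > high:
        return "high"
    if confidence < low:
        return "low"
    return "medium"


for score in (0.95, 0.8, 0.65, 0.5, 0.2):
    print(score, route_band(score))
```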

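Both thresholds are configurable per workflow. The defaults are 0.8 (high) and 0.5 (low), but you should tune them based on your actual traffic. If most queries are landing in the low band, your thresholds are probably set too high, and you're paying top-tier prices for messages a cheaper model could handle.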
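One way to check whether your thresholds fit your traffic is to replay logged confidence scores and count where each would land. The sample scores below are made up, and `band_distribution` is a hypothetical helper. Reading "most" as "more than half" is also an assumption.

```python
from collections import Counter


def band_distribution(
    scores: list[float], high: float = 0.8, low: float = 0.5
) -> dict[str, float]:
    """Return the share of messages that would land in each band."""
    counts = Counter(
        "high" if s > high else "low" if s < low else "medium" for s in scores
    )
    total = len(scores) or 1  # avoid dividing by zero on an empty log
    return {band: counts[band] / total for band in ("high", "medium", "low")}


# Hypothetical confidence scores pulled from a day of classifier logs.
logged = [0.92, 0.41, 0.35, 0.88, 0.47, 0.62, 0.30, 0.44]
dist = band_distribution(logged)
print(dist)
if dist["low"] > 0.5:
    print("Most traffic hits the expensive model; revisit thresholds or categories.")
```

Rerunning this with candidate thresholds lets you preview how each setting would shift traffic before you change the workflow.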

Important tradeoff: The classification pass itself uses an LLM call. Use a cheap, fast model for classification (Haiku, GPT-4o-mini) so the overhead is minimal. The savings come from routing the response generation to the right model, not from the classification step.

What You Configure

The confidence_router is a workflow node. You wire it between your trigger and your response nodes. Configuration:

Categories — the intent labels the classifier can assign (e.g., ["faq", "order_status", "complex_support", "greeting"])

High threshold — confidence score above which the message routes to the cheap model (default: 0.8)

Low threshold — confidence score below which the message routes to the expensive model (default: 0.5)

Model override — optionally use a specific model for the classification step itself
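To make these settings concrete, here is a hypothetical Python model of them with basic sanity checks. The field names and validation rules are assumptions for illustration, not the node's real configuration schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ConfidenceRouterConfig:
    """Illustrative stand-in for the node's settings; field names are hypothetical."""

    categories: list[str]
    high_threshold: float = 0.8
    low_threshold: float = 0.5
    # None means no override (assumed: the node then uses its default classifier model).
    classifier_model: Optional[str] = None

    def __post_init__(self) -> None:
        if not self.categories:
            raise ValueError("at least one category is required")
        if len(set(self.categories)) != len(self.categories):
            raise ValueError("categories must be unique")
        if not 0.0 <= self.low_threshold < self.high_threshold <= 1.0:
            raise ValueError("need 0 <= low_threshold < high_threshold <= 1")


config = ConfidenceRouterConfig(
    categories=["faq", "order_status", "complex_support", "greeting"],
    classifier_model="gpt-4o-mini",
)
print(config)
```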

You then wire three outbound edges from the node — high, medium, and low — each pointing to a different llm_call node with the model and system prompt appropriate for that complexity level.
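This sketch represents the three outbound edges as data and checks that every band is wired before traffic flows. The dictionary layout, model names, and system prompts are placeholders; this is not how the workflow editor actually stores edges.

```python
REQUIRED_BANDS = ("high", "medium", "low")

# Hypothetical edge table: each band points at an llm_call node with its own
# model and system prompt. Model names are placeholders, not real model IDs.
edges = {
    "high": {"node": "llm_call", "model": "cheap-fast-model",
             "system_prompt": "Answer briefly from the FAQ."},
    "medium": {"node": "llm_call", "model": "mid-tier-model",
               "system_prompt": "Reason step by step using retrieved context."},
    "low": {"node": "llm_call", "model": "most-capable-model",
            "system_prompt": "Investigate carefully and use tools as needed."},
}


def validate_edges(edges: dict[str, dict]) -> None:
    """Fail fast if a band is left unwired or an unknown band is wired."""
    missing = [b for b in REQUIRED_BANDS if b not in edges]
    extra = [b for b in edges if b not in REQUIRED_BANDS]
    if missing or extra:
        raise ValueError(f"missing bands: {missing}, unknown bands: {extra}")


def target_for(band: str, edges: dict[str, dict]) -> dict:
    """Return the llm_call settings that a message in this band is handed to."""
    return edges[band]


validate_edges(edges)
print(target_for("medium", edges)["model"])
```

Validating up front means a message can never reach a band with nowhere to go.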

The model choices are entirely yours:

| Band | Example models | Use case |
|------|---------------|----------|
| High | Haiku, GPT-4o-mini | Simple FAQ, greetings, status checks |
| Medium | Sonnet, GPT-4o | Moderate reasoning, context retrieval |
| Low | Opus, GPT-4o | Complex multi-step reasoning, tool orchestration |

These are examples, not prescriptions. Use whatever models your workspace supports: Claude, GPT-4o, Gemini, Groq, Workers AI, or models accessed through your own provider keys (BYOK, bring your own key).

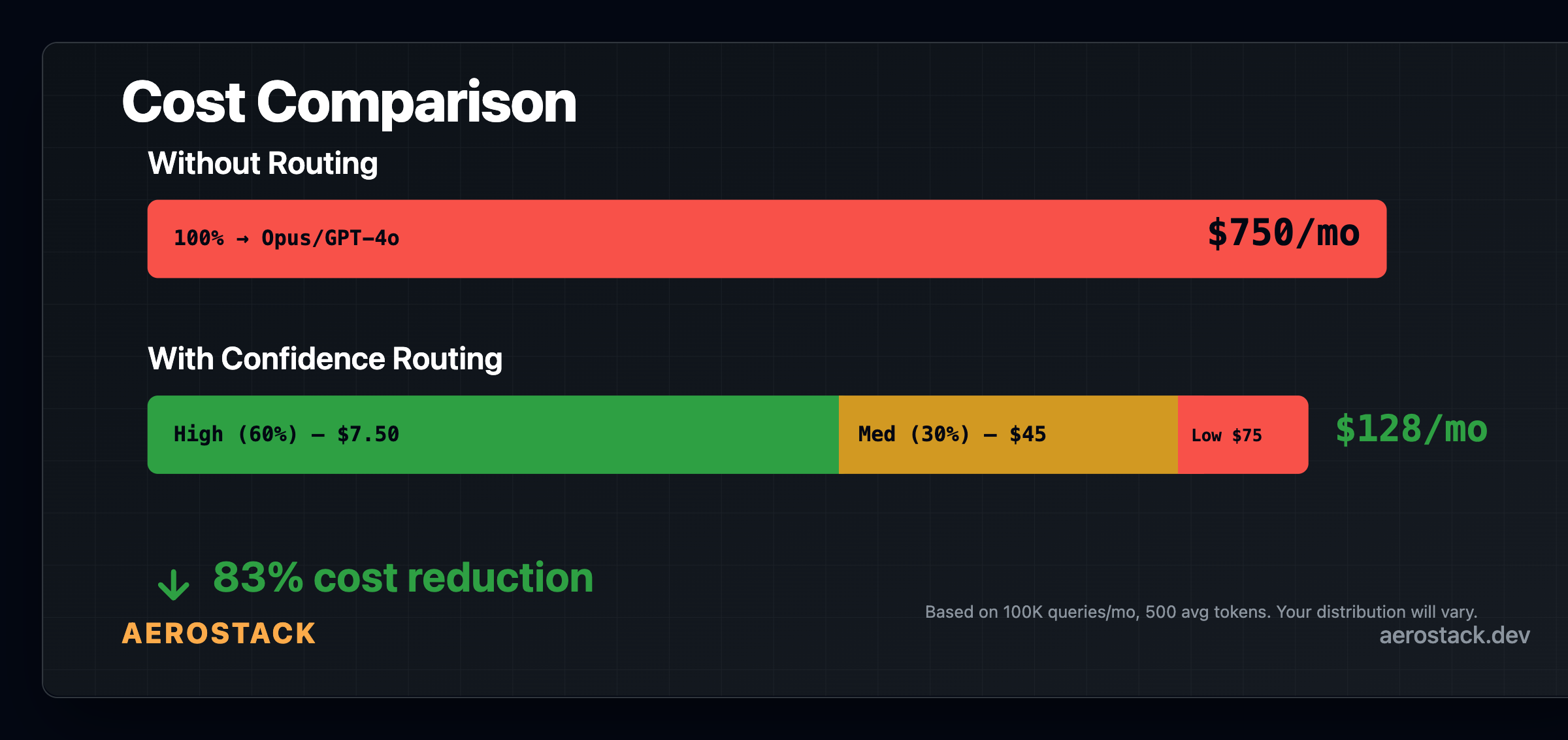

Cost Math

The savings depend on your query distribution. Here's an example using published model pricing (check Anthropic and OpenAI for current rates):

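Scenario: A support bot handling 100,000 queries per month, averaging 500 input tokens per query. To keep the math simple, this counts input tokens only; output tokens are billed separately, at higher rates.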

Without routing: Every query goes to your most capable model at ~$15/1M input tokens.

50M input tokens × $15/1M = $750/month

With routing (assuming 60% high, 30% medium, 10% low):

60K × 500 tokens × $0.25/1M = $7.50

30K × 500 tokens × $3/1M = $45

10K × 500 tokens × $15/1M = $75

Total: ~$128/month
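Here is the arithmetic above as a small calculator, so you can plug in your own volume and split. The prices are the example figures from this section, not live rates. `monthly_input_cost` is a hypothetical helper that, like the example, counts input tokens only.

```python
# Example input prices in USD per 1M tokens, as used above.
# Check the providers' pricing pages for current rates.
PRICE_PER_M = {"high": 0.25, "medium": 3.00, "low": 15.00}


def monthly_input_cost(queries: int, tokens_per_query: int, split: dict[str, float]) -> float:
    """Input-token cost for one month, given the share of queries per band."""
    total = 0.0
    for band, share in split.items():
        tokens = queries * share * tokens_per_query
        total += tokens / 1_000_000 * PRICE_PER_M[band]
    return total


# Without routing, every query pays the most capable model's price.
baseline = 100_000 * 500 / 1_000_000 * PRICE_PER_M["low"]
routed = monthly_input_cost(100_000, 500, {"high": 0.60, "medium": 0.30, "low": 0.10})
print(f"without routing: ${baseline:,.2f}")
print(f"with routing:    ${routed:,.2f}")
```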

That's roughly 83% below the $750 baseline, before classification overhead, but it depends entirely on your actual distribution. If your bot handles mostly technical support, the split might be 30/40/30 and savings are smaller. If it's a restaurant reservation bot, it might be 80/15/5 and savings are larger.

Test your actual traffic before committing to thresholds. The 60/30/10 split above is an illustration, not a promise.

Also factor in the classification cost: each message gets an extra LLM call for classification. At Haiku pricing (~$0.25/1M input tokens), classifying 100K messages of ~500 tokens each (50M tokens) adds roughly $12.50 a month, bringing the routed total to about $140. Still a net win if your distribution skews toward simple queries.
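The calculator below adds one classification call per message, which shows how the net result moves with the query mix. It assumes, as the estimate above does, that each classification call sees ~500 input tokens at ~$0.25/1M and that output tokens are ignored. The three splits are the illustrative ones from this section, and `routed_total` is a hypothetical helper.

```python
PRICE_PER_M = {"high": 0.25, "medium": 3.00, "low": 15.00}  # USD per 1M input tokens
CLASSIFIER_PRICE_PER_M = 0.25  # Haiku-class classifier, as in the example


def routed_total(queries: int, tokens: int, split: dict[str, float]) -> float:
    """Response-generation cost plus one classification call per message.

    Assumes the classification call sees the same input tokens as the
    response call, and ignores output tokens.
    """
    millions = queries * tokens / 1_000_000
    generation = sum(millions * share * PRICE_PER_M[band] for band, share in split.items())
    classification = millions * CLASSIFIER_PRICE_PER_M
    return generation + classification


baseline = 100_000 * 500 / 1_000_000 * PRICE_PER_M["low"]  # everything on the top model
splits = {
    "60/30/10": {"high": 0.60, "medium": 0.30, "low": 0.10},
    "30/40/30": {"high": 0.30, "medium": 0.40, "low": 0.30},
    "80/15/5": {"high": 0.80, "medium": 0.15, "low": 0.05},
}
for name, split in splits.items():
    total = routed_total(100_000, 500, split)
    print(f"{name}: ${total:,.2f}/month, saves ${baseline - total:,.2f}")
```

Even the technical-support mix (30/40/30) comes out well under the baseline in this model. The classification overhead stays fixed at $12.50 regardless of the split.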

When to Use This

Confidence routing makes sense when:

Mixed query complexity. A meaningful portion of your messages are simple enough for a cheaper model.

Cost matters. You're running enough volume that per-query savings compound.

Quality is acceptable. You've tested that your cheap model handles simple queries well enough. If users notice quality drops on routed messages, your thresholds need tuning or the cheap model isn't capable enough.

It doesn't make sense when:

All queries are complex. If your bot handles specialized technical support or legal analysis, use your best model for everything.

Volume is low. Below a few hundred messages per day, the engineering overhead of setting up routing isn't worth the savings.

Latency is critical. The classification pass adds a full LLM round trip before response generation can start. For latency-sensitive applications, measure the tradeoff.

Wrapping Up the Series

This is the last post in our Week 1 series. Over seven days, we've covered:

Day 1: What Aerostack is — workspaces, workflows, bots, and the MCP configuration problem

Day 2: Building a Discord bot with MCP tools in 5 minutes

Day 3: AI-native workflow nodes — agent_loop, confidence_router, guardrail, and the rest

Day 4: Workspaces — one URL, encrypted secrets, team-level access control

Day 5: Cross-model MCP registry — one workspace serving Claude, OpenAI, and Gemini clients

Day 6: Gen 3 bots — why tool-orchestrated agents beat flowchart bots

Day 7: Confidence routing — routing by complexity to save on inference costs

If any of this solves a problem you're dealing with, try it.