Every Platform Has if/else. We Built Something Different.

Most workflow platforms treat AI as a callback. You set up the plumbing — triggers, conditions, API calls — and AI sits at the end: "here's your data, now summarize it." That works until your workflows need to think for themselves.

We built Aerostack's workflow engine around a different assumption: the LLM isn't a step in the flow. It drives the flow. That meant building node types that don't exist on traditional platforms — nodes where the AI decides the next step, where tool calls are resolved from your workspace at runtime, where execution pauses for human identity verification and resumes hours later.

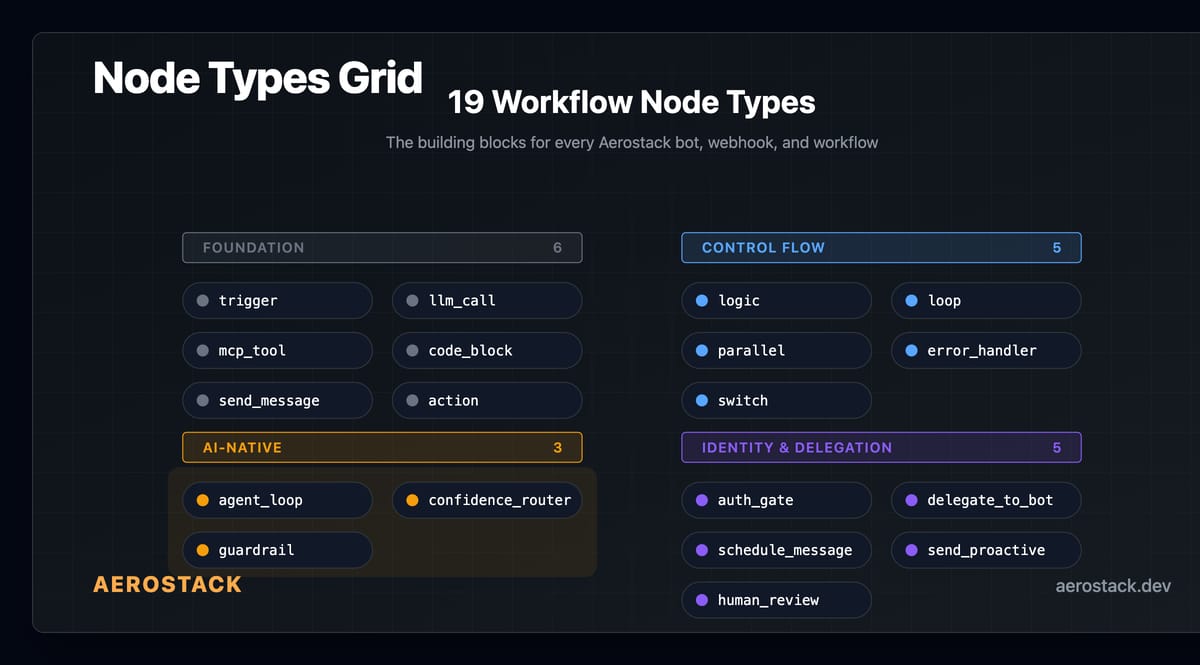

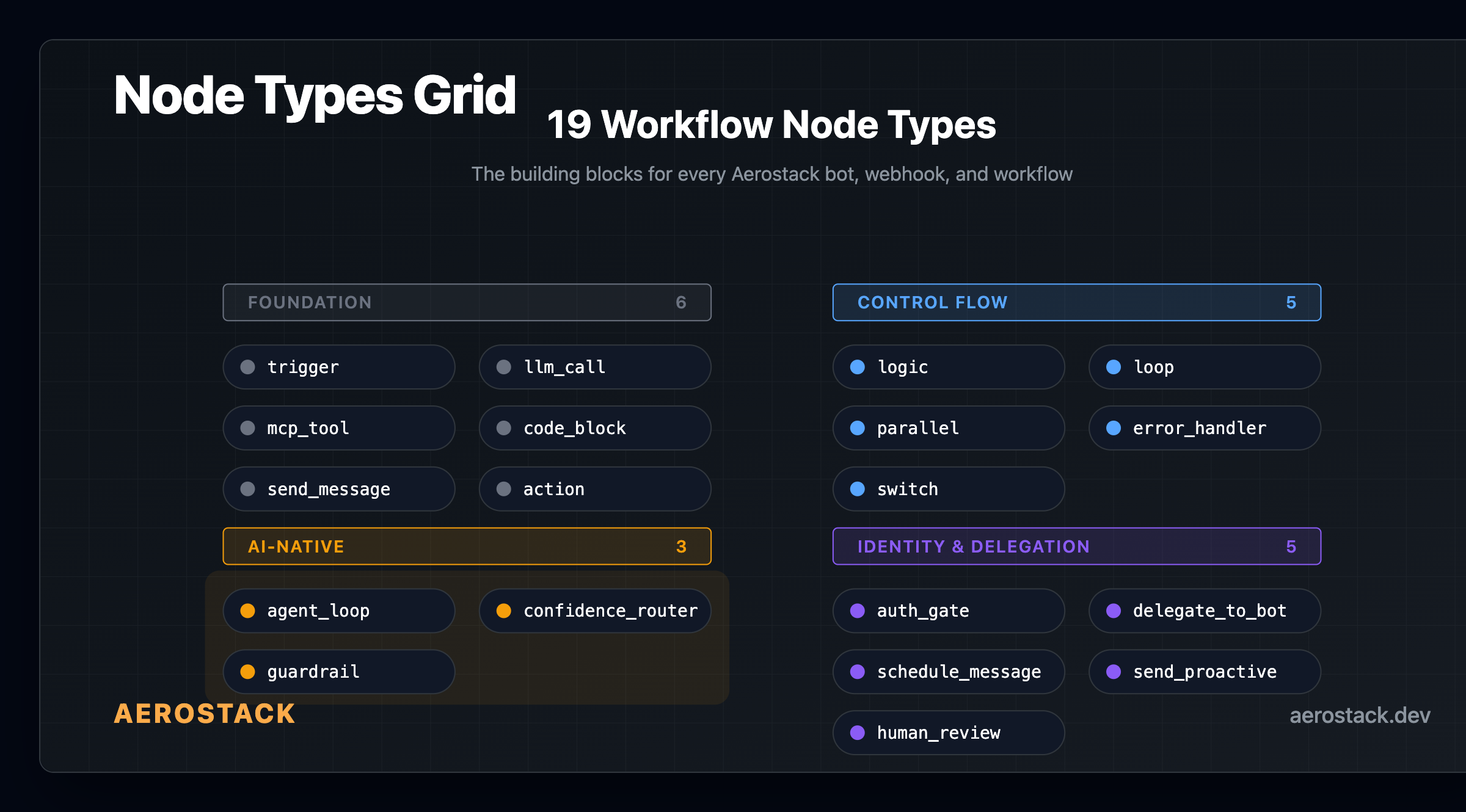

19 node types. The number isn't the point. What matters is what they do.

How Execution Works

Before the nodes: the execution model.

Every workflow run lives on a Cloudflare Durable Object. The DO maintains a queue of pending nodes, a variable store, and execution state. It dequeues a node, executes it, follows outbound edges based on the result, and queues the next nodes.

State survives restarts. If a node pauses — waiting for human input, auth verification, scheduled delivery — the DO persists everything and resumes when the signal arrives. Minutes, hours, or days later. Your workflow picks up exactly where it stopped.

There are safety limits at every layer: max nodes per run, iteration caps on loops and agent loops, timeouts on long-running nodes. These are configurable per node so you can tune the tradeoff between autonomy and resource usage.
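To make the execution model concrete, here is a minimal in-memory sketch of the dequeue, execute, follow-edges loop with a pause and a resume. It is not Aerostack's implementation. `RunState`, `drain`, `resumeRun`, the `port` field, and the `maxNodes` argument are names invented for illustration, and a plain object stands in for whatever the Durable Object actually persists.

```typescript
// Hypothetical shapes for illustration; none of these names come from Aerostack.
type NodeResult = { port: string; paused?: boolean };

interface WorkflowNode {
  id: string;
  // Runs against the variable store and names the outbound port to follow.
  run: (vars: Record<string, unknown>) => NodeResult;
}

interface Edge { from: string; port: string; to: string }

interface RunState {
  queue: string[];               // ids of pending nodes
  vars: Record<string, unknown>; // variable store
  executed: number;              // nodes executed so far in this run
  waitingOn: string | null;      // node the run is paused on, if any
}

function enqueueTargets(edges: Edge[], state: RunState, from: string, port: string): void {
  for (const e of edges) {
    if (e.from === from && e.port === port) state.queue.push(e.to);
  }
}

// Dequeue, execute, follow edges; stop when the queue drains or a node pauses.
function drain(
  nodes: Map<string, WorkflowNode>,
  edges: Edge[],
  state: RunState,
  maxNodes: number,
): RunState {
  while (state.queue.length > 0) {
    if (state.executed >= maxNodes) throw new Error("max nodes per run exceeded");
    const id = state.queue.shift() as string;
    const node = nodes.get(id);
    if (node === undefined) throw new Error(`unknown node: ${id}`);
    const result = node.run(state.vars);
    state.executed += 1;
    if (result.paused === true) {
      state.waitingOn = id; // a real engine would persist `state` at this point
      return state;
    }
    enqueueTargets(edges, state, id, result.port);
  }
  return state;
}

// Called when the awaited signal arrives: choose the outbound port and continue.
function resumeRun(
  nodes: Map<string, WorkflowNode>,
  edges: Edge[],
  state: RunState,
  port: string,
  maxNodes: number,
): RunState {
  if (state.waitingOn === null) throw new Error("run is not paused");
  const pausedId = state.waitingOn;
  state.waitingOn = null;
  enqueueTargets(edges, state, pausedId, port);
  return drain(nodes, edges, state, maxNodes);
}
```

Because `RunState` is plain data, it can be serialized when a node pauses and rehydrated when the signal arrives, which is the property the pause-and-resume behavior depends on.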

Foundation Nodes

These exist on every workflow platform. We need them, but they're not the story.

`trigger` — entry point. Accepts webhooks, cron schedules, user messages, API calls.

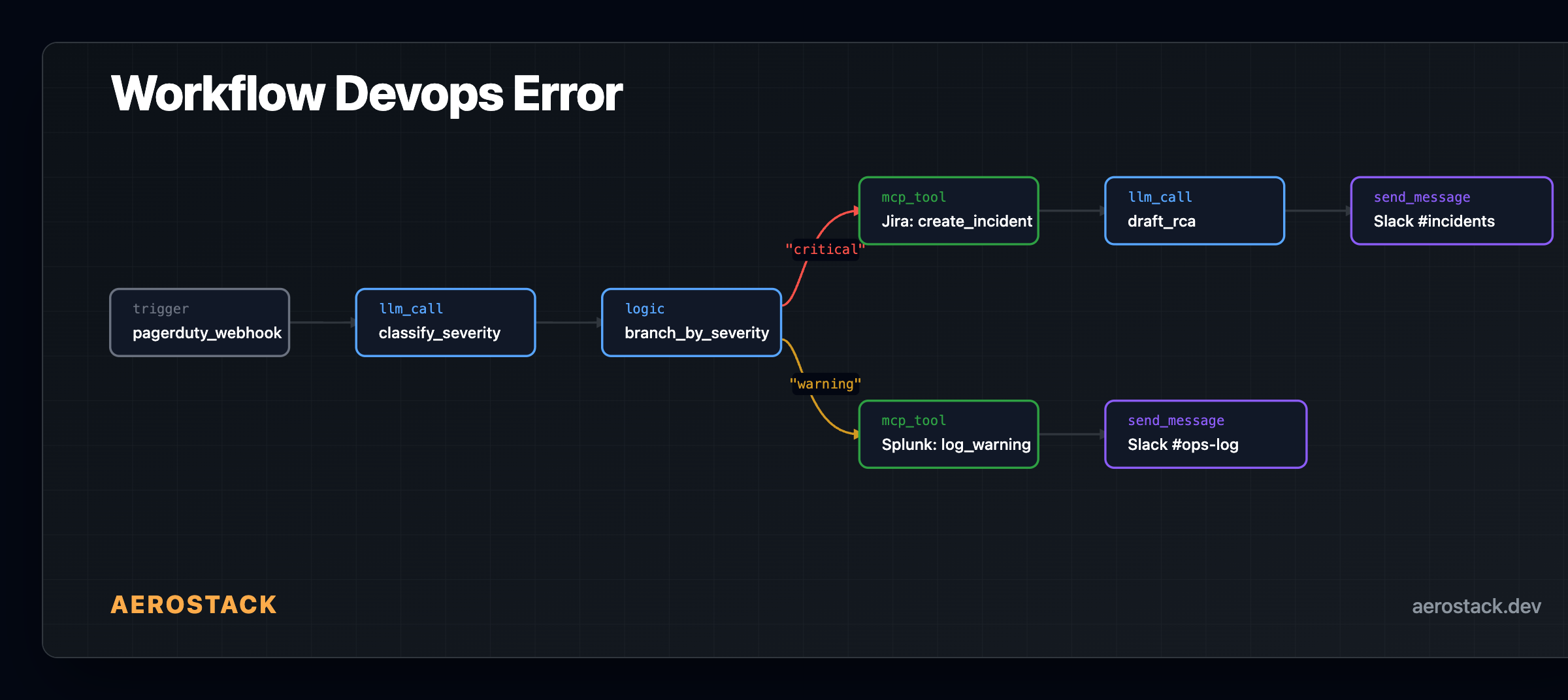

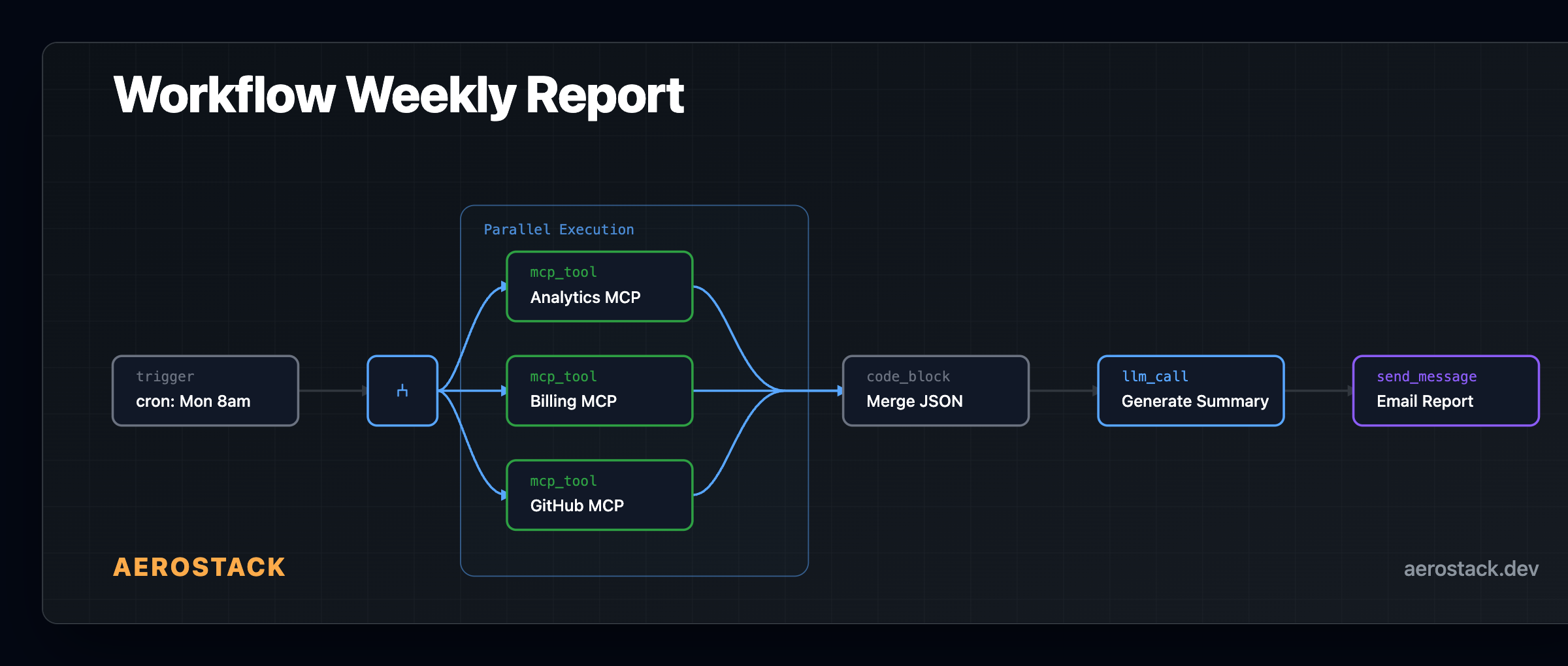

`llm_call` — sends data to an LLM, returns structured output. You define the model, prompt, and expected schema.

`mcp_tool` — calls a single tool from an MCP server in your workspace. You specify the tool name and arguments. The gateway handles credential injection — the workflow never touches raw secrets.

`code_block` — runs sandboxed JavaScript for transformations, merges, or logic that's simpler than an LLM call. You get standard string/array methods, JSON.parse/stringify, Math.*, and basic control flow. Variables you set persist to the workflow state for downstream nodes.

`send_message` — outputs to the user's platform (Discord, Slack, Telegram, WhatsApp, web).

`action` — a multi-purpose node for side effects: set_variable, end_conversation, human_handoff, http_request, create_ticket.

Control Flow

`logic` — if/else or switch branching. Conditions evaluate against workflow variables, LLM outputs, or MCP responses.

`loop` — three types:

`for_each` — iterate over an array variable.

`count` — fixed iteration count.

`while` — condition-based, re-evaluates each iteration.

All have configurable iteration caps to prevent runaway execution.
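As a rough sketch of how the three loop types and the iteration cap could fit together, the function below drives all of them through one loop. The `LoopConfig` shape, `runLoop`, and the `capped` flag are assumptions made for this example, not the engine's actual configuration format.

```typescript
// Hypothetical config shapes; the real node configuration is not shown in this post.
type LoopConfig =
  | { kind: "for_each"; items: unknown[] }
  | { kind: "count"; times: number }
  | { kind: "while"; condition: (vars: Record<string, unknown>) => boolean };

function runLoop(
  config: LoopConfig,
  vars: Record<string, unknown>,
  body: (vars: Record<string, unknown>, item: unknown, index: number) => void,
  maxIterations: number,
): { iterations: number; capped: boolean } {
  let i = 0;
  const hasNext = (): boolean => {
    switch (config.kind) {
      case "for_each": return i < config.items.length;
      case "count": return i < config.times;
      case "while": return config.condition(vars); // re-evaluated before every iteration
    }
  };
  while (hasNext()) {
    // The cap applies to every loop type, so a `while` that never turns false still stops.
    if (i >= maxIterations) return { iterations: i, capped: true };
    const item = config.kind === "for_each" ? config.items[i] : undefined;
    body(vars, item, i);
    i += 1;
  }
  return { iterations: i, capped: false };
}
```

Returning `capped` lets a downstream node tell a loop that finished apart from one that was cut off.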

`parallel` — fan out to multiple branches and converge results. Under the hood, the Durable Object queues all branch targets and executes them within the same run. From your perspective, it looks like parallel branches that merge.
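A small sketch of that queue-based fan-out, under the assumption that each branch produces one value and the merge is keyed by branch id. `Branch` and `runParallel` are illustrative names, not part of the product.

```typescript
// Hypothetical branch shape: each branch reads the shared variables and returns a value.
type Branch = { id: string; run: (vars: Readonly<Record<string, unknown>>) => unknown };

function runParallel(
  branches: Branch[],
  vars: Record<string, unknown>,
): Record<string, unknown> {
  const queue = [...branches]; // all branch targets are queued up front
  const merged: Record<string, unknown> = {};
  while (queue.length > 0) {
    const branch = queue.shift() as Branch;
    // Branches execute one after another inside the same run.
    merged[branch.id] = branch.run(vars);
  }
  return merged; // the converge step sees every branch result under its id
}
```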

`switch` — multi-way branching on a single variable. Cleaner than chaining logic nodes when you have 3+ output paths.

`error_handler` — catches node failures and routes to recovery logic. Retry, log, escalate, or fail gracefully.

AI-Native Nodes

These are the reason we built a custom engine instead of using Temporal or Step Functions.

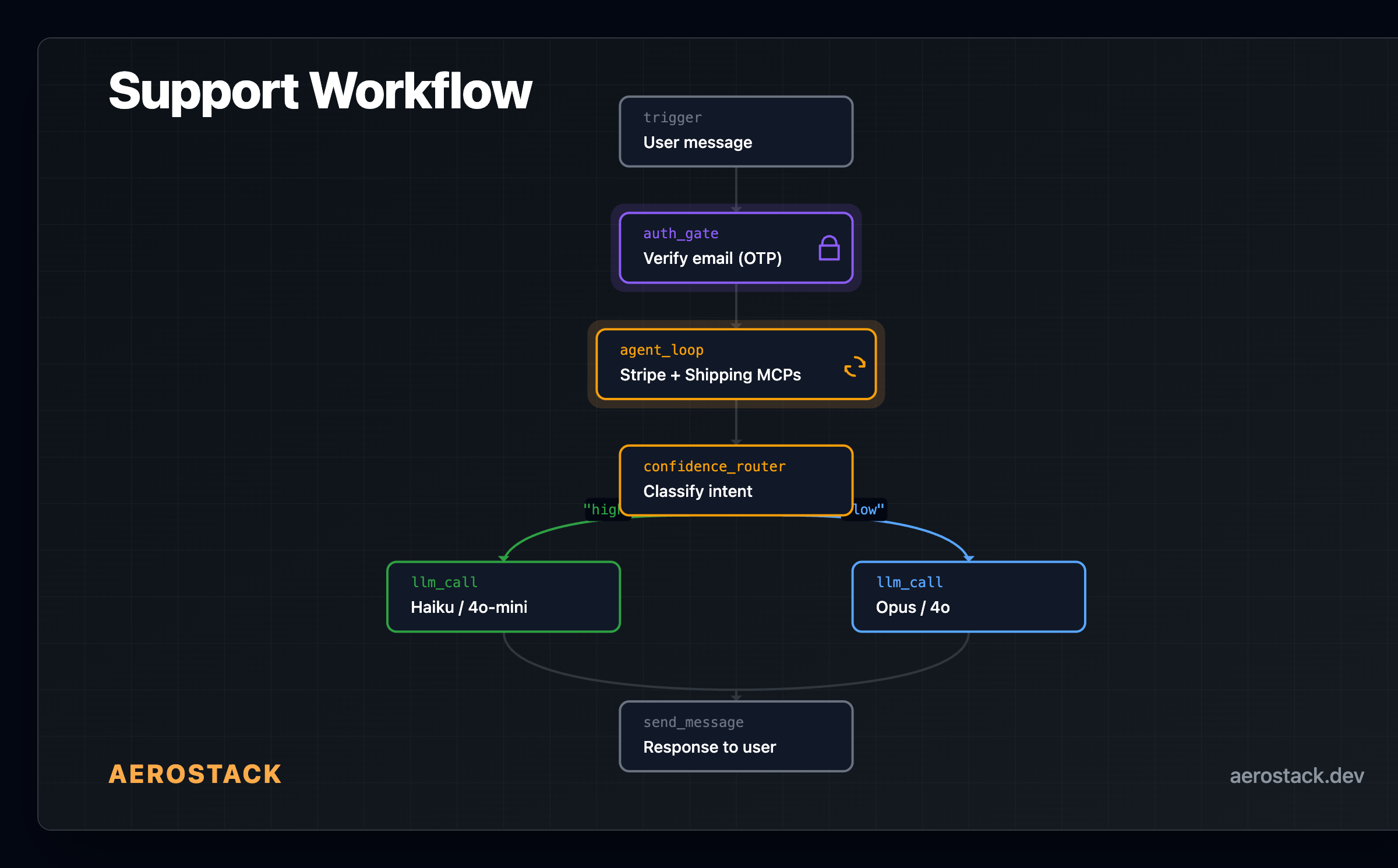

agent_loop

An autonomous ReAct cycle. You give it a goal, a system prompt, and a set of available tools. The LLM decides what to call, reads the result, and loops until it's done or hits a limit.

You can filter which tools the agent can see, set iteration limits, override the model, and adjust temperature. When the LLM stops calling tools, the loop ends and its final response flows to the next node.

This was the hardest node to build. The LLM needs enough freedom to solve multi-step problems — query a database, check a different API, retry with new context — but runaway loops burn tokens and time. The configurable iteration cap and timeout give you control without being prescriptive about execution order.
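The shape of that loop can be sketched in a few lines. This is a simplified, synchronous stand-in: `Model` is a stub function rather than a real LLM client, and `AgentStep`, `agentLoop`, and `maxIterations` are names assumed for the example. A production loop would be asynchronous and would also enforce a timeout.

```typescript
// Hypothetical types; a stub function stands in for the LLM.
type AgentStep =
  | { type: "tool_call"; tool: string; args: Record<string, unknown> }
  | { type: "final"; text: string };

type Tool = (args: Record<string, unknown>) => string;
type Model = (transcript: string[]) => AgentStep;

function agentLoop(
  goal: string,
  model: Model,
  tools: Record<string, Tool>,
  maxIterations: number,
): { answer: string | null; iterations: number; transcript: string[] } {
  const transcript = [`goal: ${goal}`];
  for (let i = 0; i < maxIterations; i++) {
    const step = model(transcript);
    // No tool call means the model is done: the loop ends with its answer.
    if (step.type === "final") {
      return { answer: step.text, iterations: i + 1, transcript };
    }
    const tool = tools[step.tool];
    const observation = tool ? tool(step.args) : `error: unknown tool ${step.tool}`;
    // The observation is fed back so the next decision can build on it.
    transcript.push(`call ${step.tool}: ${observation}`);
  }
  // Cap reached without a final answer; the caller decides how to recover.
  return { answer: null, iterations: maxIterations, transcript };
}
```

Nothing in the loop fixes the order of tool calls. The only hard constraint is the cap, which matches the tradeoff described above.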

confidence_router

Classifies a message into intent categories with a confidence score, then routes to different branches.

You define the categories (e.g., ["billing", "technical", "general"]) and two thresholds. The node asks an LLM to classify the message and assign a confidence score between 0 and 1. Based on the score, execution routes to one of three bands — high, medium, or low.

In practice, you wire different models or response strategies to each band. Simple FAQ lookups route to a cheap model. Complex multi-tool reasoning routes to your most capable model. Thresholds are configurable per node — tune them after watching your traffic.
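The two-threshold, three-band routing reduces to a small function. In this sketch the names `routeByConfidence`, `highThreshold`, and `lowThreshold` are assumptions, and so is the choice to treat both thresholds as inclusive lower bounds, which the post does not specify.

```typescript
type Band = "high" | "medium" | "low";

// Hypothetical shape of the classifier's output.
interface Classification { category: string; confidence: number }

function routeByConfidence(
  result: Classification,
  highThreshold: number,
  lowThreshold: number,
): Band {
  if (lowThreshold > highThreshold) {
    throw new Error("lowThreshold must not exceed highThreshold");
  }
  if (result.confidence >= highThreshold) return "high";
  if (result.confidence >= lowThreshold) return "medium";
  return "low";
}
```

With thresholds of 0.8 and 0.5, a `billing` classification at 0.92 lands in the high band, one at 0.6 in the medium band, and one at 0.3 in the low band.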

guardrail

Validates LLM output before it leaves the workflow. Not a single check — it's a composable set of checks that you enable per workflow:

PII detection — regex-based, catches email, phone, SSN, credit card patterns. Zero cost.

Custom blocklist — your own regex patterns to block specific terms or formats. Zero cost.

Output length limits — truncate overly long responses. Zero cost.

Cost budget — block execution if the workflow has spent more than a threshold. Zero cost.

Prompt injection detection — heuristic pattern matching for jailbreak attempts.

Topic scope — LLM-based check that the response stays within allowed topics.

Content policy — LLM-based check for harmful content categories.

The first four checks make no LLM call, so they cost nothing. The LLM-based checks (topic scope and content policy) cost a small number of tokens. You pick which ones matter for your use case.

If a check fails, execution routes to a blocked path where you send a safe fallback response.
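One way to picture the composable part is a list of small check functions run in order, as in the sketch below. It covers only three of the regex-style checks, the patterns are deliberately simplistic, and `Check`, `runGuardrail`, and the `pass`/`blocked` path names are assumptions for illustration rather than the node's real interface.

```typescript
// Hypothetical check interface: a check either passes text along or blocks with a reason.
type CheckResult = { ok: true; text: string } | { ok: false; reason: string };
type Check = (text: string) => CheckResult;

// Simplified patterns for illustration; real PII detection needs broader coverage.
const EMAIL = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/;
const SSN = /\b\d{3}-\d{2}-\d{4}\b/;

const piiCheck: Check = (text) =>
  EMAIL.test(text) || SSN.test(text) ? { ok: false, reason: "pii" } : { ok: true, text };

// Patterns should not use the `g` flag, since `test` on a global regex is stateful.
const blocklist = (patterns: RegExp[]): Check => (text) =>
  patterns.some((p) => p.test(text)) ? { ok: false, reason: "blocklist" } : { ok: true, text };

// Length limits truncate instead of blocking.
const maxLength = (limit: number): Check => (text) => ({ ok: true, text: text.slice(0, limit) });

function runGuardrail(
  text: string,
  checks: Check[],
): { path: "pass"; text: string } | { path: "blocked"; reason: string } {
  let current = text;
  for (const check of checks) {
    const result = check(current);
    if (!result.ok) return { path: "blocked", reason: result.reason };
    current = result.text;
  }
  return { path: "pass", text: current };
}
```

Enabling a check per workflow then amounts to adding it to the array, for example `runGuardrail(reply, [piiCheck, blocklist([/internal-only/i]), maxLength(2000)])`.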

Identity and Delegation

auth_gate

Multi-turn identity verification inside a workflow. This is the node that makes support workflows viable for real identity-gated operations — refunds, account changes, data access.

The node sends an OTP or magic link via email or SMS — we support Resend, Amazon SES, Twilio, MSG91, and custom HTTP providers. The workflow pauses and waits for the user to verify. Once verified, the identity is injected into workflow variables and execution continues. Downstream nodes know who the user is — an agent_loop that runs after an auth_gate can pull their order history, check their subscription, process a refund. All gated by proven identity.

If the user was already verified earlier in the conversation, the node skips re-verification and continues immediately.
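The pause, verify, and skip-if-verified behavior can be sketched as a two-function state machine. Everything named here is hypothetical: `Session`, `enterAuthGate`, `submitOtp`, and the `verified_identity` variable are stand-ins, and the provider call is a callback instead of a real Resend, SES, or Twilio client. A real gate would also need code expiry, attempt limits, and a constant-time comparison.

```typescript
// Hypothetical session shape: workflow variables plus any outstanding challenge.
interface Session {
  vars: Record<string, unknown>;
  pendingOtp: { code: string; contact: string } | null;
}

type GateOutcome = "continue" | "paused";

function enterAuthGate(
  session: Session,
  contact: string,
  sendOtp: (contact: string, code: string) => void,
  generateCode: () => string,
): GateOutcome {
  // Already verified earlier in the conversation: skip re-verification.
  if (session.vars["verified_identity"] !== undefined) return "continue";
  const code = generateCode();
  session.pendingOtp = { code, contact };
  sendOtp(contact, code);
  return "paused"; // the workflow waits here until the user responds
}

function submitOtp(session: Session, code: string): GateOutcome {
  const pending = session.pendingOtp;
  if (pending === null || pending.code !== code) return "paused";
  // Inject the proven identity so downstream nodes can rely on it.
  session.vars["verified_identity"] = pending.contact;
  session.pendingOtp = null;
  return "continue";
}
```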

delegate_to_bot

Hands off to another bot in your workspace. Your support bot encounters a billing question it's not equipped for → delegates to the billing bot, which has a different system prompt and different MCP access. The user doesn't notice the handoff.

schedule_message

Queues a message for future delivery. "Remind the user in 2 hours." "Send a follow-up email tomorrow at 9am."

human_review

Pauses workflow execution and waits for a human to approve, reject, or modify the pending action. The reviewer gets notified via their preferred channel — web dashboard, email, Slack, or Telegram. When they respond, the workflow resumes down the approved or rejected path.

send_proactive

The inverse of a trigger. Instead of waiting for a user to message you, the workflow initiates contact — pushes a notification, sends an SMS or email based on a condition or schedule.

Three Workflows, Real Node Types

DevOps Error Response:

Customer Support with Identity:

Weekly Report with Parallel Fan-out:

Design Philosophy

A platform with 100 node types — if they're just if/else/switch/delay variants — is a spreadsheet with extra steps. What matters is whether your nodes can express workflows that weren't possible before.

agent_loop means the LLM decides the execution path, not you. mcp_tool means any tool on an MCP server in your workspace becomes a workflow step, with no SDK integration. confidence_router means you stop paying for expensive inference on simple lookups. auth_gate means identity is a structural property of the workflow, not bolted-on middleware. guardrail means safety is composable and inspectable, not a comment in the prompt.

These compose. auth_gate → agent_loop = identity-verified autonomous execution. agent_loop → confidence_router = AI that routes its own output quality. parallel → code_block → llm_call = gather data from multiple sources, merge, summarize.

19 primitives that combine into workflows traditional orchestration platforms can't express.

Tomorrow: workspaces in 60 seconds — how the MCP infrastructure behind these workflows actually gets set up.